
Data federation is a specialized architectural approach to data management that allows organizations to access and query data across multiple disparate sources as if they resided in a single, unified database. Unlike traditional data warehousing, which requires physically moving and transforming data into a central repository (ETL), data federation creates a virtualized layer that leaves the data in its original source. This “zero-copy” philosophy is becoming essential in a world where data is scattered across multi-cloud environments, legacy on-premises databases, and various SaaS applications.

As we navigate the complexities of modern data ecosystems, the ability to gain real-time insights without the latency of data movement is a significant competitive advantage. Data federation platforms provide a unified interface for BI tools and applications, handling the heavy lifting of joining tables from different systems—such as a SQL database, a NoSQL store, and a cloud bucket—on the fly. This approach not only reduces storage costs but also ensures that the data being analyzed is always the most current version available.
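To make the idea concrete, here is a minimal Python sketch of a federated join. It uses three stand-in sources: an in-memory SQLite table plays the SQL database, a list of dicts plays the NoSQL document store, and a CSV string plays an object in a cloud bucket. All names (`customers`, `orders_docs`, `regions_csv`, `federated_customer_revenue`) are hypothetical. A real platform would also push filtering down to each source instead of reading everything into the engine.

```python
import csv
import io
import sqlite3

# Source 1: a relational database (in-memory SQLite stands in for it).
sql_db = sqlite3.connect(":memory:")
sql_db.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
sql_db.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Acme"), (2, "Globex")])

# Source 2: a document store (a list of JSON-like dicts stands in for it).
orders_docs = [
    {"order_id": 10, "customer_id": 1, "amount": 250.0},
    {"order_id": 11, "customer_id": 2, "amount": 99.5},
    {"order_id": 12, "customer_id": 1, "amount": 40.0},
]

# Source 3: a CSV object in a cloud bucket (an in-memory string stands in for it).
regions_csv = "customer_id,region\n1,EMEA\n2,APAC\n"


def federated_customer_revenue():
    """Join all three sources at query time without copying them into a shared store."""
    names = dict(sql_db.execute("SELECT id, name FROM customers"))
    regions = {
        int(r["customer_id"]): r["region"]
        for r in csv.DictReader(io.StringIO(regions_csv))
    }
    totals = {}
    for doc in orders_docs:
        cid = doc["customer_id"]
        totals[cid] = totals.get(cid, 0.0) + doc["amount"]
    return sorted((names[cid], regions[cid], total) for cid, total in totals.items())


print(federated_customer_revenue())  # [('Acme', 'EMEA', 290.0), ('Globex', 'APAC', 99.5)]
```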

Best for: Data architects, engineers, and analysts in large-scale enterprises who need to break down data silos and provide immediate access to distributed data without the overhead of building massive, complex ETL pipelines.

Not ideal for: Organizations with very small, centralized datasets where a simple SQL database suffices, or for use cases requiring heavy, complex data transformations that are better handled by traditional batch processing.

Key Trends in Data Federation Platforms

- Zero-Copy Integration: The move toward “data staying where it lives” to reduce security risks and infrastructure costs associated with duplicating massive datasets.

- AI-Driven Query Optimization: Platforms are using machine learning to predict the most efficient way to pull data from multiple sources, minimizing network traffic and latency.

- Active Metadata Management: Automated discovery and cataloging of data sources, ensuring that the federation layer is always aware of schema changes in the underlying systems.

- Data Mesh Alignment: Federation is becoming the technical foundation for the Data Mesh architecture, enabling domain-oriented, decentralized data ownership.

- Multi-Cloud Sovereignty: Tools are focusing on joining data across different cloud providers (e.g., AWS, Azure, and Google Cloud) while maintaining strict regional compliance.

- Unified Governance and Security: Implementing a single security policy at the federation layer that propagates down to all connected sources, ensuring consistent access control.

- Real-Time API Generation: The ability to instantly turn a federated query into a REST or GraphQL API for developers to use in modern application builds.

- Computational Pushdown: Improving performance by “pushing” the processing logic down to the source database, rather than pulling all raw data into the federation engine.
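The Active Metadata Management trend comes down to noticing when a source schema drifts away from what the federation layer has recorded. The sketch below assumes a SQLite source and a plain dict as the catalog (both stand-ins); `current_schema` and `detect_drift` are hypothetical helpers, not any vendor's API.

```python
import sqlite3


def current_schema(conn, table):
    """Read {column name: declared type} from the live source."""
    # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) rows.
    # The table name is interpolated directly, so it must come from a trusted catalog.
    return {row[1]: row[2] for row in conn.execute(f"PRAGMA table_info({table})")}


def detect_drift(recorded, live):
    """Compare the catalog's recorded schema with the live schema."""
    added = sorted(set(live) - set(recorded))
    removed = sorted(set(recorded) - set(live))
    retyped = sorted(c for c in set(live) & set(recorded) if live[c] != recorded[c])
    return {"added": added, "removed": removed, "retyped": retyped}


source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
catalog = {"orders": current_schema(source, "orders")}  # snapshot taken at registration

# The owning team changes the table after it was registered with the federation layer.
source.execute("ALTER TABLE orders ADD COLUMN currency TEXT")

drift = detect_drift(catalog["orders"], current_schema(source, "orders"))
print(drift)  # {'added': ['currency'], 'removed': [], 'retyped': []}
if any(drift.values()):
    catalog["orders"] = current_schema(source, "orders")  # refresh the catalog entry
```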

How We Selected These Tools

- Query Performance and Latency: We prioritized platforms that demonstrate high-speed execution even when joining data across geographically dispersed sources.

- Breadth of Connectors: Evaluation was based on the tool’s ability to connect to a wide variety of sources, including SQL, NoSQL, Hadoop, and SaaS APIs.

- Ease of Virtualization: We looked for platforms that allow for the creation of virtual views with minimal coding or complex configuration.

- Security and Access Control: Priority was given to tools that offer robust, fine-grained security features like row-level and column-level masking.

- Scalability for Enterprise Workloads: Each tool was vetted for its ability to handle thousands of concurrent queries and petabytes of distributed data.

- Interoperability with BI Tools: The selection includes platforms that integrate seamlessly with standard analytics tools like Tableau, Power BI, and Looker.

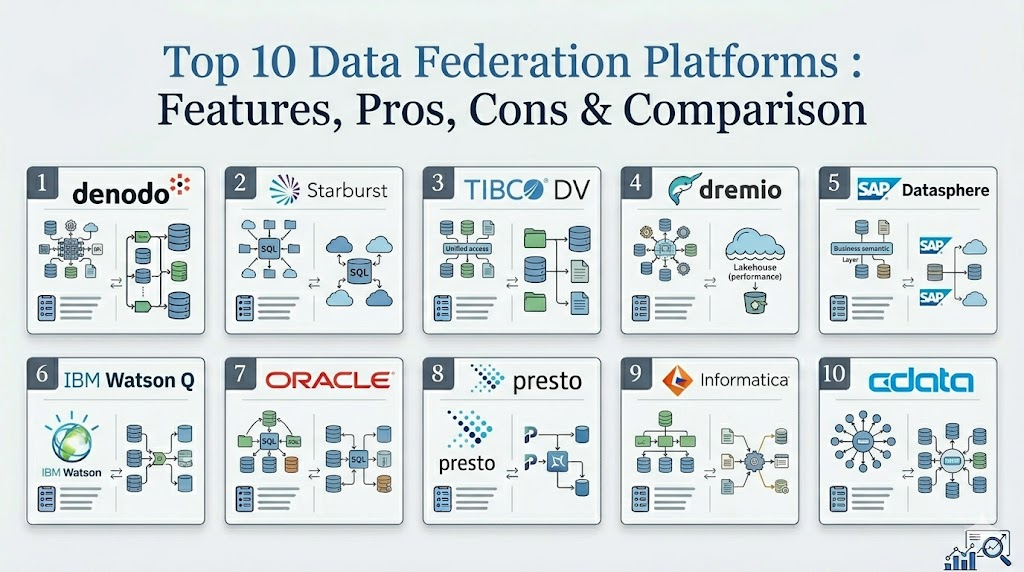

Top 10 Data Federation Platforms

1. Denodo Platform

Denodo is widely recognized as a leader in data virtualization and federation. It provides a high-performance abstraction layer that allows users to search, integrate, and share data from diverse sources through a single, unified interface.

Key Features

- Advanced query optimizer with dynamic query rewriting and pushdown capabilities.

- Integrated data catalog for easy discovery and self-service data access.

- Support for a massive range of connectors across cloud and on-premises systems.

- Automated data lineage and impact analysis for better governance.

- AI-powered recommendations to help users find relevant datasets.
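Impact analysis over a lineage graph is essentially a graph traversal: start at the changed source and collect everything downstream. This is a minimal breadth-first sketch over a hypothetical lineage map; it illustrates the general technique and is not Denodo's implementation.

```python
from collections import deque

# Hypothetical lineage: each object maps to the objects built directly from it.
lineage = {
    "crm.customers": ["view.customer_360"],
    "erp.orders": ["view.customer_360", "view.daily_sales"],
    "view.customer_360": ["report.churn_dashboard"],
    "view.daily_sales": ["report.revenue_kpi", "report.churn_dashboard"],
}


def impact_of(changed):
    """Return every downstream object affected by a change to `changed` (BFS)."""
    seen, queue = set(), deque([changed])
    while queue:
        for child in lineage.get(queue.popleft(), []):
            if child not in seen:  # each object is reported once, even via several paths
                seen.add(child)
                queue.append(child)
    return sorted(seen)


print(impact_of("erp.orders"))
```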

Pros

- Exceptional performance for real-time data integration across large enterprises.

- Very intuitive graphical interface for building complex virtual data models.

Cons

- Higher cost of entry compared to open-source alternatives.

- Requires specialized training to fully leverage the advanced optimization features.

Platforms / Deployment

Windows / Linux / AWS / Azure / Google Cloud

Hybrid

Security & Compliance

Role-based access control, SSO, and advanced data masking.

SOC 2 / GDPR compliant.

Integrations & Ecosystem

It acts as the central hub for the entire data stack, connecting to everything from legacy mainframes to modern Snowflake or Databricks environments.

Support & Community

Professional enterprise support with a dedicated global university for certification and training.

2. Starburst (Trino)

Based on the open-source Trino engine, Starburst is designed for high-speed, distributed SQL querying. It excels at federating data across massive data lakes and traditional databases simultaneously.

Key Features

- Distributed SQL query engine capable of running across thousands of nodes.

- Parallel connectors that pull data from multiple sources at once.

- Fine-grained security with built-in access control for sensitive data.

- High-speed connectors for S3, Hadoop, Snowflake, and SQL Server.

- Cost-based optimizer designed for complex, multi-source joins.
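A cost-based optimizer uses statistics such as estimated row counts before choosing a join strategy. As a heavily simplified, hypothetical sketch (Trino's real optimizer considers far more, such as join distribution and join reordering), the function below builds its in-memory hash table on whichever input is estimated to be smaller and probes it with the larger one.

```python
def hash_join(left, right, key, estimated_rows):
    """Inner-join two lists of row dicts on `key`, building on the smaller side.

    estimated_rows: {'left': n, 'right': m}, the kind of statistic an optimizer
    might read from connector metadata (hypothetical input for this sketch).
    """
    build_is_left = estimated_rows["left"] <= estimated_rows["right"]
    build, probe = (left, right) if build_is_left else (right, left)

    # Build phase: hash the smaller input in memory.
    table = {}
    for row in build:
        table.setdefault(row[key], []).append(row)

    # Probe phase: stream the larger input and look up matches.
    result = []
    for p in probe:
        for b in table.get(p[key], []):
            # Merge as {**left_row, **right_row} regardless of which side was built.
            result.append({**b, **p} if build_is_left else {**p, **b})
    return result, ("left" if build_is_left else "right")


customers = [{"cid": 1, "name": "Acme"}, {"cid": 2, "name": "Globex"}]  # small dimension
events = [{"cid": 1, "event": "login"}, {"cid": 1, "event": "buy"}, {"cid": 2, "event": "login"}]
rows, build_side = hash_join(events, customers, "cid", {"left": 3_000_000, "right": 2})
print(build_side, len(rows))  # right 3
```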

Pros

- Incredible speed for large-scale analytical queries across data lakes.

- Proven scalability at some of the world’s largest technology companies.

Cons

- Management can be complex for teams without strong SQL and infrastructure skills.

- Heavy resource requirements for the underlying compute cluster.

Platforms / Deployment

Linux / Kubernetes / AWS / Azure / Google Cloud

Cloud / Hybrid

Security & Compliance

Integration with Apache Ranger and various identity providers.

Not publicly stated.

Integrations & Ecosystem

Strongest in the big data ecosystem, integrating deeply with Hive, Delta Lake, and Iceberg formats.

Support & Community

Backed by the creators of Trino with extensive enterprise support and a large open-source community.

3. TIBCO Data Virtualization

TIBCO provides a mature data federation solution that focuses on orchestrating data across the enterprise. It simplifies access to complex data silos and provides a consistent view for business users.

Key Features

- Centralized management console for designing and monitoring data services.

- Advanced caching mechanisms to improve performance for frequent queries.

- Automated discovery of relationship patterns across different databases.

- Extensive library of pre-built adapters for enterprise applications like SAP.

- Unified security model that applies across all federated sources.
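Result caching for frequent queries can be as simple as remembering rows for a fixed time-to-live. This is a minimal, hypothetical sketch of that idea (not TIBCO's caching engine): the class name, the TTL policy, and the whitespace-only normalization of SQL text are all assumptions made for illustration.

```python
import time


class QueryResultCache:
    """Keeps federated query results for `ttl_seconds` so repeat queries skip the sources."""

    def __init__(self, run_query, ttl_seconds=60.0, clock=time.monotonic):
        self._run_query = run_query  # callable that actually executes against the sources
        self._ttl = ttl_seconds
        self._clock = clock          # injectable so expiry can be tested deterministically
        self._entries = {}           # normalized SQL -> (expires_at, rows)

    def query(self, sql):
        key = " ".join(sql.split())  # naive normalization: collapse runs of whitespace
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]          # fresh hit: no source access
        rows = self._run_query(sql)  # miss or expired: go to the sources
        self._entries[key] = (now + self._ttl, rows)
        return rows


calls = []


def run_against_sources(sql):
    calls.append(sql)
    return [("Acme", 290.0)]


fake_now = [0.0]
cache = QueryResultCache(run_against_sources, ttl_seconds=30, clock=lambda: fake_now[0])
cache.query("SELECT * FROM sales_view")
cache.query("SELECT *   FROM sales_view")  # same query modulo whitespace: served from cache
fake_now[0] = 31.0
cache.query("SELECT * FROM sales_view")    # entry expired: sources are queried again
print(len(calls))  # 2
```

The trade-off is freshness: within the TTL window, users see cached rows rather than the live source.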

Pros

- Excellent stability for mission-critical enterprise environments.

- Strong focus on data governance and compliance reporting.

Cons

- The user interface can feel dated compared to newer, cloud-native tools.

- Integration with some modern NoSQL sources can be less seamless.

Platforms / Deployment

Windows / Linux / Cloud

Hybrid

Security & Compliance

Enterprise-grade encryption and detailed audit logging.

Not publicly stated.

Integrations & Ecosystem

Integrates deeply with the TIBCO analytics suite and major ERP systems like SAP and Oracle.

Support & Community

Long-standing enterprise support structure with a global network of partners.

4. Dremio

Dremio is a “Data Lakehouse” platform that uses Apache Arrow to provide lightning-fast data federation. It is specifically designed to make data lakes feel and perform like a high-end relational database.

Key Features

- “Data Reflections” technology that uses pre-calculated materializations for speed.

- Direct SQL access to data stored in cloud object storage like S3 or ADLS.

- Semantic layer that allows users to build virtual datasets without code.

- Apache Arrow-based execution for high-speed data transfer.

- Git-like version control for data (Data-as-Code).

Pros

- Unbeatable performance for querying cloud object storage directly.

- Makes it very easy for non-technical users to build their own data views.

Cons

- Primarily focused on data lakes; federation with traditional SQL databases is a secondary focus.

- Requires significant memory resources to maintain its high-speed reflections.

Platforms / Deployment

Linux / Kubernetes / AWS / Azure / Google Cloud

Cloud / Hybrid

Security & Compliance

Integrated row-and-column level security and SSO.

Not publicly stated.

Integrations & Ecosystem

Strongest integration with Tableau and Power BI through optimized connectors.

Support & Community

Active community through Dremio University and strong professional support for enterprise clients.

5. SAP Datasphere

Formerly known as SAP Data Warehouse Cloud, Datasphere is a unified data fabric that federates data from SAP and non-SAP sources, providing a business-ready semantic layer.

Key Features

- Business-centric semantic modeling that preserves SAP business logic.

- Seamless federation between on-premises SAP HANA and cloud sources.

- Integrated data catalog and metadata management.

- Built-in data flow builder for light transformation during federation.

- Direct connectivity to hundreds of third-party cloud applications.

Pros

- The absolute best choice for organizations that rely on SAP ERP systems.

- Protects the integrity of complex SAP data structures during federation.

Cons

- Less cost-effective if SAP is not the primary data source.

- Requires an SAP-centric skill set for administration.

Platforms / Deployment

SAP BTP / Multi-cloud

Cloud

Security & Compliance

Enterprise-grade SAP security and compliance frameworks.

ISO 27001 / SOC 2 compliant.

Integrations & Ecosystem

Deeply tied to the SAP ecosystem but offers open connectors for external cloud databases.

Support & Community

Full SAP enterprise support and a massive global network of SAP consultants.

6. IBM Cloud Pak for Data (Watson Query)

Watson Query is IBM’s solution for data federation, allowing users to query data across multiple clouds and on-premises sources without data movement.

Key Features

- Constellation-based query engine that distributes work across multiple nodes.

- AI-driven automation for data discovery and cataloging.

- Integrated governance through IBM Knowledge Catalog.

- Simplified interface for joining tables across SQL and NoSQL sources.

- Support for hybrid and multi-cloud environments.

Pros

- Strong focus on data governance and automated metadata management.

- Excellent for large organizations with complex, heterogeneous data landscapes.

Cons

- The broader Cloud Pak platform can be complex to deploy and manage.

- Pricing can be high for smaller-scale use cases.

Platforms / Deployment

Red Hat OpenShift / AWS / Azure / IBM Cloud

Hybrid

Security & Compliance

Robust identity management and data protection via IBM security.

SOC 2 / HIPAA compliant.

Integrations & Ecosystem

Integrates with the full IBM AI and data suite, as well as major third-party databases.

Support & Community

Global IBM enterprise support with extensive documentation and training modules.

7. Oracle Big Data SQL

For organizations centered on the Oracle ecosystem, this platform allows users to query data in Hadoop, NoSQL, and Object Storage using standard Oracle SQL.

Key Features

- Single SQL dialect for querying Oracle DB, Hadoop, and S3.

- Smart Scan technology that pushes query processing to the data source.

- Unified security that extends Oracle’s security model to big data sources.

- Exadata-level performance optimizations for distributed queries.

- Automated metadata synchronization between Oracle and big data stores.

Pros

- Allows Oracle-skilled teams to query big data without learning new languages.

- Exceptional performance when integrated with Oracle hardware.

Cons

- Very Oracle-centric; less ideal for organizations moving away from Oracle.

- Licensing can be expensive and complex.

Platforms / Deployment

Linux / Oracle Cloud / Exadata

On-premises / Cloud

Security & Compliance

Oracle Advanced Security and Vault integration.

Not publicly stated.

Integrations & Ecosystem

Deeply tied to the Oracle Database and Big Data Appliance ecosystem.

Support & Community

Standard Oracle Premier Support and a massive network of database administrators.

8. Presto (PrestoDB / Presto Foundation)

Presto is the original open-source distributed SQL engine developed at Facebook. It remains a core technology for high-speed federation across heterogeneous data sources.

Key Features

- In-memory distributed query execution for low latency.

- Separation of compute and storage for independent scaling.

- Pluggable connector architecture for SQL, NoSQL, and Kafka.

- Support for standard ANSI SQL.

- Capable of querying exabytes of data across distributed systems.
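A pluggable connector architecture means the engine only talks to a small interface, and each source supplies its own implementation. Presto's actual connector SPI is a set of Java interfaces; the Python sketch below only mirrors the idea, with hypothetical `Connector`, `InMemoryConnector`, and `Catalog` classes, and scans several sources concurrently.

```python
from abc import ABC, abstractmethod
from concurrent.futures import ThreadPoolExecutor


class Connector(ABC):
    """Contract each source plugin implements (hypothetical; not Presto's real SPI)."""

    @abstractmethod
    def scan(self, table):
        """Return a list of row dicts for `table`."""


class InMemoryConnector(Connector):
    """Toy plugin backed by a dict of tables; a real one would talk to a database or topic."""

    def __init__(self, tables):
        self._tables = tables

    def scan(self, table):
        return list(self._tables[table])


class Catalog:
    """Routes 'catalog.table' names to the connector registered under that catalog."""

    def __init__(self):
        self._connectors = {}

    def register(self, name, connector):
        self._connectors[name] = connector

    def scan_many(self, qualified_names):
        """Scan several tables concurrently and return results in the order requested."""
        def scan_one(qualified):
            catalog_name, table = qualified.split(".", 1)
            return self._connectors[catalog_name].scan(table)

        with ThreadPoolExecutor(max_workers=max(1, len(qualified_names))) as pool:
            return list(pool.map(scan_one, qualified_names))


catalog = Catalog()
catalog.register("pg", InMemoryConnector({"users": [{"id": 1, "name": "Ana"}]}))
catalog.register("kafka", InMemoryConnector({"clicks": [{"user_id": 1}, {"user_id": 1}]}))
users, clicks = catalog.scan_many(["pg.users", "kafka.clicks"])
print(len(users), len(clicks))  # 1 2
```

Adding a new source type only requires a new `Connector` subclass; the engine code does not change.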

Pros

- Proven at the largest scales of data in the world.

- Completely open-source with no vendor lock-in for the core engine.

Cons

- Requires significant technical expertise to manage and tune.

- Lacks the graphical management tools found in commercial platforms.

Platforms / Deployment

Linux / Kubernetes / Any Cloud

Local / Cloud

Security & Compliance

Customizable via plugins; supports Kerberos and LDAP.

Not publicly stated.

Integrations & Ecosystem

A massive ecosystem of connectors developed by the open-source community.

Support & Community

Supported by the Presto Foundation and a global community of engineers.

9. Informatica Data Virtualization

Informatica provides a data virtualization layer as part of its Intelligent Data Management Cloud (IDMC), focusing on creating a unified “data fabric” for the enterprise.

Key Features

- AI-powered data integration and discovery through the CLAIRE engine.

- Centralized governance and metadata management.

- Support for real-time data access across multi-cloud environments.

- Integrated data quality and masking features.

- No-code interface for building federated data services.

Pros

- The best choice for organizations already using Informatica for ETL or MDM.

- Strongest AI-driven automation features for data discovery.

Cons

- Can be overkill for organizations only needing simple data federation.

- Premium pricing reflects its position as a comprehensive enterprise suite.

Platforms / Deployment

Informatica Cloud (IDMC) / Multi-cloud

Cloud

Security & Compliance

Comprehensive enterprise security and data privacy features.

SOC 2 / HIPAA compliant.

Integrations & Ecosystem

Integrates perfectly with the rest of the Informatica cloud suite and major SaaS providers.

Support & Community

Tiered enterprise support with a very large community of data integration professionals.

10. CData Virtuality

CData Virtuality is a modern data virtualization and federation tool that focuses on high agility, allowing teams to connect and query data in minutes rather than weeks.

Key Features

- “Logical Data Warehouse” approach that combines federation and automation.

- High-speed connectors for over 200 different data sources.

- Automated materialization of frequently used data views.

- Integrated SQL editor for building virtual datasets.

- Support for both real-time federation and scheduled data movement.
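The combination of automated materialization and scheduled data movement can be sketched as a usage-driven policy: serve a view live until it is read often enough, then serve a stored copy that a scheduler refreshes. The threshold rule and the `AutoMaterializer` class are hypothetical illustrations, not CData Virtuality's actual logic.

```python
from collections import Counter


class AutoMaterializer:
    """Serves a view live until it proves popular, then from a stored copy."""

    def __init__(self, views, threshold=3):
        self._views = views          # view name -> zero-argument callable that federates live
        self._threshold = threshold
        self._reads = Counter()      # live reads per view
        self._stored = {}            # view name -> materialized rows

    def read(self, name):
        """Return (rows, mode), where mode is 'live' or 'materialized'."""
        if name in self._stored:
            return self._stored[name], "materialized"
        self._reads[name] += 1
        rows = self._views[name]()   # real-time federated path
        if self._reads[name] >= self._threshold:
            self._stored[name] = rows  # popular enough: keep a copy
        return rows, "live"

    def refresh_all(self):
        """Scheduled data movement: recompute every materialized view from its sources."""
        for name in self._stored:
            self._stored[name] = self._views[name]()


source_hits = Counter()


def regional_sales():
    source_hits["regional_sales"] += 1
    return [("EMEA", 290.0)]


m = AutoMaterializer({"regional_sales": regional_sales}, threshold=2)
modes = [m.read("regional_sales")[1] for _ in range(4)]
print(modes)  # ['live', 'live', 'materialized', 'materialized']
m.refresh_all()  # e.g. triggered nightly by a scheduler
print(source_hits["regional_sales"])  # 3
```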

Pros

- Extremely fast setup and time-to-value for smaller teams.

- Offers a unique hybrid approach of federation and automated data movement.

Cons

- Less established in massive-scale enterprises compared to Denodo.

- The administrative interface is less comprehensive than larger suites.

Platforms / Deployment

Windows / Linux / Cloud

Hybrid

Security & Compliance

Standard role-based access and encryption.

Not publicly stated.

Integrations & Ecosystem

Excellent connectivity to a wide range of modern SaaS applications and cloud APIs.

Support & Community

Responsive professional support and a growing network of data engineering partners.

Comparison Table

| Tool Name | Best For | Platform(s) Supported | Deployment | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| 1. Denodo | Enterprise Fabric | Win, Linux, Multi-cloud | Hybrid | AI-Optimizer | N/A |
| 2. Starburst | Data Lake Querying | Linux, Kubernetes, Cloud | Cloud / Hybrid | Trino Engine | N/A |
| 3. TIBCO DV | Governance Focus | Win, Linux, Cloud | Hybrid | Advanced Caching | N/A |
| 4. Dremio | Lakehouse Speed | Linux, Kubernetes, Cloud | Cloud / Hybrid | Arrow Execution | N/A |
| 5. SAP Datasphere | SAP Environments | SAP BTP, Multi-cloud | Cloud | Semantic Layer | N/A |
| 6. IBM Watson Q | Hybrid Cloud | OpenShift, Multi-cloud | Hybrid | AI Governance | N/A |
| 7. Oracle BDSQL | Oracle Ecosystem | Linux, Oracle Cloud | On-premises / Cloud | Smart Scan | N/A |
| 8. Presto | Big Data Scale | Linux, Any Cloud | Local / Cloud | Open Source | N/A |
| 9. Informatica | IDMC Customers | Informatica Cloud | Cloud | CLAIRE AI | N/A |
| 10. CData Virtuality | Rapid Deployment | Win, Linux, Cloud | Hybrid | Hybrid Engine | N/A |

Evaluation & Scoring

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Perf (10%) | Support (10%) | Value (15%) | Total |
|---|---|---|---|---|---|---|---|---|
| 1. Denodo | 10 | 8 | 10 | 9 | 10 | 9 | 6 | 8.90 |
| 2. Starburst | 10 | 5 | 9 | 8 | 10 | 9 | 8 | 8.50 |
| 3. TIBCO DV | 9 | 7 | 9 | 9 | 8 | 8 | 7 | 8.20 |
| 4. Dremio | 9 | 8 | 8 | 8 | 10 | 8 | 8 | 8.45 |
| 5. SAP Datasphere | 8 | 7 | 9 | 9 | 8 | 9 | 6 | 7.90 |
| 6. IBM Watson Q | 9 | 6 | 9 | 9 | 8 | 8 | 6 | 7.90 |
| 7. Oracle BDSQL | 9 | 5 | 8 | 9 | 10 | 8 | 5 | 7.65 |
| 8. Presto | 9 | 4 | 9 | 6 | 10 | 6 | 10 | 7.90 |
| 9. Informatica | 9 | 7 | 10 | 9 | 8 | 9 | 6 | 8.30 |
| 10. CData Virtuality | 8 | 9 | 9 | 7 | 8 | 8 | 8 | 8.20 |
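Because each Total is a weighted sum of the criterion scores, it can be recomputed mechanically. The Python sketch below copies the per-criterion scores and the percentage weights from the scoring table's headers and derives each total. It is a checking aid, not part of the original methodology; integer arithmetic is used so that floating-point drift cannot creep in before the final division.

```python
# Criterion weights (in percent) taken from the scoring table's column headers.
WEIGHTS = {"core": 25, "ease": 15, "integrations": 15, "security": 10,
           "perf": 10, "support": 10, "value": 15}
assert sum(WEIGHTS.values()) == 100

# Per-criterion scores (0 to 10) copied from the table, in WEIGHTS order.
SCORES = {
    "Denodo": (10, 8, 10, 9, 10, 9, 6),
    "Starburst": (10, 5, 9, 8, 10, 9, 8),
    "TIBCO DV": (9, 7, 9, 9, 8, 8, 7),
    "Dremio": (9, 8, 8, 8, 10, 8, 8),
    "SAP Datasphere": (8, 7, 9, 9, 8, 9, 6),
    "IBM Watson Q": (9, 6, 9, 9, 8, 8, 6),
    "Oracle BDSQL": (9, 5, 8, 9, 10, 8, 5),
    "Presto": (9, 4, 9, 6, 10, 6, 10),
    "Informatica": (9, 7, 10, 9, 8, 9, 6),
    "CData Virtuality": (8, 9, 9, 7, 8, 8, 8),
}


def weighted_total(scores):
    """Weighted average on the 0-10 scale: integer weighted sum, divided once at the end."""
    return sum(w * s for w, s in zip(WEIGHTS.values(), scores)) / 100


# Print tools from highest to lowest total (ties keep table order).
for tool, scores in sorted(SCORES.items(), key=lambda kv: -weighted_total(kv[1])):
    print(f"{tool:<18} {weighted_total(scores):.2f}")
```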

The scoring above reflects each platform’s ability to act as a robust, enterprise-grade federation engine. Denodo and Starburst lead the rankings because they offer the most advanced optimization and performance across the widest variety of sources. Dremio scores highly for its modern approach to cloud storage, while Informatica and SAP Datasphere are strongest for organizations already committed to their respective ecosystems, even though their standalone “Value” scores are modest. Open-source options like Presto earn the highest “Value” score but the lowest “Ease” and “Support” scores, so the licensing savings are offset by the in-house expertise they demand.

Which Data Federation Platform Is Right for You?

Solo / Freelancer

For individuals, a full federation platform is rarely needed. However, if you are building a small data project, open-source engines such as Presto or Trino (the engine behind Starburst) are the best ways to experiment with federation concepts without licensing costs.

SMB

Small to medium businesses should look for tools with fast setup and low administrative overhead. CData Virtuality is a strong contender here, as it offers a modern, simplified interface that allows a small team to get up and running quickly.

Mid-Market

For growing companies that need to join a mix of cloud SaaS apps and local databases, Dremio or Denodo (Professional Edition) provide a great balance of performance and ease of use, allowing the data team to scale without building complex ETL pipelines.

Enterprise

Large-scale organizations with complex regulatory needs should prioritize Denodo, IBM Cloud Pak for Data, or Informatica. These platforms offer the governance, security, and global support structures required for high-stakes enterprise environments.

Budget vs Premium

Presto and Starburst Galaxy (Starburst’s managed cloud offering) are the go-to choices for performance-to-price. Denodo and TIBCO represent the premium tier, where you are paying for deep automation, a unified interface, and enterprise-grade peace of mind.

Feature Depth vs Ease of Use

Denodo provides the most depth in terms of query optimization and data cataloging. CData Virtuality and Dremio focus more on ease of use, making the data accessible to analysts through a simplified semantic layer.

Integrations & Scalability

If your data resides primarily in huge data lakes (S3/ADLS), Starburst and Dremio are the most scalable options. For organizations with hundreds of different specialized sources, Denodo offers the most comprehensive connector library.

Security & Compliance Needs

Organizations in healthcare or finance should look at IBM, SAP, or Informatica. These vendors have the most mature security frameworks and provide the detailed lineage and auditing reports necessary for passing strict compliance audits.

Frequently Asked Questions (FAQs)

1. What is the main difference between data federation and a data warehouse?

A data warehouse moves and stores data centrally, while federation leaves the data in its original source and queries it virtually in real-time.

2. Does data federation slow down the source database?

It can if queries are not optimized. Modern platforms use “pushdown” technology to run only the necessary logic on the source, minimizing the impact.

3. Do I still need ETL if I have a data federation platform?

Not always. Federation can replace many ETL tasks, but you may still need ETL for very complex historical data transformations or high-frequency batch processing.

4. Is data federation the same as data virtualization?

Data federation is a specific type of data virtualization. While virtualization covers the general abstraction layer, federation specifically refers to joining data across multiple sources.

5. How does a federation engine handle data security?

It creates a unified security layer. You define access rules in the federation platform, which then enforces them across all connected databases and files.
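The “define once, enforce everywhere” idea can be sketched as a policy applied to rows after they arrive from any connector. In this minimal example the `POLICIES` structure, the masking rule, and the role names are hypothetical; real platforms usually also push row filters down to the source rather than filtering afterwards.

```python
# Hypothetical policy model: one policy per role, defined once at the federation layer.
POLICIES = {
    "analyst": {"row_filter": lambda row: row["region"] == "EMEA", "masked": {"email"}},
    "admin": {"row_filter": lambda row: True, "masked": set()},
}


def mask(value):
    """Hide everything but the first character (illustrative masking rule)."""
    return value[:1] + "***" if value else value


def secure(rows, role):
    """Apply the role's row filter and column masks, whatever source the rows came from."""
    policy = POLICIES[role]  # unknown roles raise KeyError: deny by default
    return [
        {col: (mask(val) if col in policy["masked"] else val) for col, val in row.items()}
        for row in rows
        if policy["row_filter"](row)
    ]


# Rows from two different sources; the same rules apply to both.
from_postgres = [{"email": "ana@example.com", "region": "EMEA"},
                 {"email": "bo@example.com", "region": "APAC"}]
from_saas_api = [{"email": "cy@example.com", "region": "EMEA"}]
all_rows = from_postgres + from_saas_api
print(secure(all_rows, "analyst"))
# [{'email': 'a***', 'region': 'EMEA'}, {'email': 'c***', 'region': 'EMEA'}]
```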

6. Can data federation work across different cloud providers?

Yes, this is one of its main strengths. You can run a single query that joins data from AWS S3, Azure SQL, and Google BigQuery.

7. What is “Pushdown Optimization”?

It is a technique where the federation engine sends the processing logic (like filtering or sorting) to the source database, so only the final results are sent over the network.
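A quick way to see why pushdown matters is to count the rows that cross the boundary between the source and the engine. In this sketch an in-memory SQLite database stands in for a remote source, and “rows transferred” is simply the length of the fetched result; both the table and the helper names are hypothetical.

```python
import sqlite3

source = sqlite3.connect(":memory:")  # stands in for a remote source database
source.execute("CREATE TABLE orders (id INTEGER, region TEXT, amount REAL)")
source.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(i, "EMEA" if i % 4 == 0 else "APAC", float(i)) for i in range(1000)],
)


def without_pushdown():
    """Pull every row across, then filter and aggregate inside the engine."""
    rows = source.execute("SELECT id, region, amount FROM orders").fetchall()
    total = sum(amount for _, region, amount in rows if region == "EMEA")
    return total, len(rows)  # (result, rows transferred)


def with_pushdown():
    """Send the filter and aggregation to the source; only the answer comes back."""
    rows = source.execute(
        "SELECT SUM(amount) FROM orders WHERE region = ?", ("EMEA",)
    ).fetchall()
    return rows[0][0], len(rows)


print(without_pushdown())  # (124500.0, 1000)
print(with_pushdown())     # (124500.0, 1)
```

Both versions return the same answer; the pushed-down version moves one row instead of a thousand.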

8. Does data federation provide real-time data?

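Generally, yes. Because it queries the source directly, you see the most recent data available without waiting for an ETL sync. The exception is a query answered from a cache or a materialization (such as TIBCO’s caching or Dremio’s Data Reflections), which can lag slightly behind the source.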

9. Can non-technical users use these platforms?

Many platforms provide a “semantic layer” or data catalog that allows business users to find and use data through a simplified, no-code interface.
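Under the hood, a semantic layer is largely a mapping from business terms to physical SQL. This minimal sketch assumes a SQLite table with cryptic column names and a hypothetical `SEMANTIC_MODEL` dict; only names defined in the model can reach the generated SQL, since any other term raises a `KeyError`.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE t_ord (c_amt REAL, c_rgn TEXT)")  # cryptic physical names
db.executemany("INSERT INTO t_ord VALUES (?, ?)", [(100.0, "EU"), (50.0, "EU"), (75.0, "US")])

# Hypothetical semantic model: business-friendly names mapped to physical SQL.
SEMANTIC_MODEL = {
    "measures": {"Revenue": "SUM(c_amt)"},
    "dimensions": {"Region": "c_rgn"},
    "table": "t_ord",
}


def ask(measure, by):
    """Translate a business question into SQL; users never see table or column names."""
    m = SEMANTIC_MODEL["measures"][measure]   # unknown terms raise KeyError
    d = SEMANTIC_MODEL["dimensions"][by]
    sql = f"SELECT {d}, {m} FROM {SEMANTIC_MODEL['table']} GROUP BY {d} ORDER BY {d}"
    return db.execute(sql).fetchall()


print(ask("Revenue", by="Region"))  # [('EU', 150.0), ('US', 75.0)]
```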

10. What are the common challenges of data federation?

Network latency between distant sources and the complexity of optimizing queries across different types of database engines are the two primary hurdles.

Conclusion

Data federation has emerged as a critical architecture for the modern, data-driven enterprise. By providing a unified virtual layer over distributed data, these platforms eliminate the friction of data movement and allow organizations to react to information in real-time. The choice between a high-performance engine like Starburst, a comprehensive fabric like Denodo, or an ecosystem-specific tool like SAP Datasphere depends on your existing infrastructure and the speed at which your business needs to move. As data continues to grow and fragment, the ability to federate will remain a cornerstone of a flexible and efficient data strategy.
