Introduction

Stream processing frameworks help teams process data continuously as events arrive, instead of waiting for batch jobs. They can transform, enrich, aggregate, and route event streams in near real time, which enables faster decisions, automated actions, and more responsive products. In practical terms, these frameworks sit between event streaming platforms and downstream systems like databases, data lakes, analytics platforms, alerting tools, and applications.

This matters now because modern systems produce constant streams of user activity, payments, logs, sensors, and operational signals. Businesses want instant insight, and engineering teams want faster feedback loops and reliable pipelines. Real-world use cases include fraud detection and risk scoring, real-time personalization, streaming ETL into analytics systems, live monitoring and anomaly detection, IoT telemetry processing, and building event-driven microservices workflows.

When choosing a stream processing framework, buyers should evaluate processing guarantees (at-least-once, exactly-once patterns), state management, windowing capabilities, latency and throughput, fault tolerance, scalability, deployment options, integration with event streaming systems, developer experience, operational observability, and total cost of ownership.

Best for: data engineering teams, platform teams, backend engineers, SRE, security teams, and organizations running event-driven systems; industries like fintech, e-commerce, telecom, gaming, logistics, media, and IoT.

Not ideal for: teams that only need batch reports; organizations without clear event design and ownership; small pipelines where simple consumers are sufficient; cases where business logic is better handled inside application services rather than centralized stream processing.

Key Trends in Stream Processing Frameworks

- More teams are adopting unified batch plus streaming APIs to reduce cognitive overhead and reuse logic.

- Stateful processing is becoming the default, enabling richer real-time applications beyond simple filtering.

- Exactly-once style guarantees are increasingly expected, but teams still validate them in real deployments.

- Observability and operational tooling are becoming critical, including lag, state size, checkpoint health, and backpressure.

- Real-time feature pipelines for ML are growing, pushing stream processing closer to product delivery.

- Cloud-native deployments are rising, but many enterprises still need hybrid and self-hosted options.

- Interoperability is valued, including open connectors, event streaming compatibility, and reusable schema practices.

- Cost control is becoming a primary design factor due to always-on processing and state storage needs.

- More platforms are emphasizing “streaming SQL” to make streaming accessible to non-specialist teams.

- Reliability expectations are rising, especially around recovery time, scaling behavior, and upgrade safety.

How We Selected These Tools (Methodology)

- Selected frameworks that are widely recognized for production-grade stream processing.

- Prioritized strong stateful processing, windowing, and fault-tolerance capabilities.

- Included a balanced mix of open-source projects and managed cloud frameworks.

- Considered integration with popular event streaming platforms and data ecosystems.

- Looked at developer experience, ecosystem maturity, and availability of community knowledge.

- Considered operational patterns such as scaling, monitoring, checkpoints, and upgrade workflows.

- Included both general-purpose frameworks and streaming SQL options for different team needs.

- Avoided claims about compliance and certifications unless clearly known, using “Not publicly stated” or “Varies / N/A” where needed.

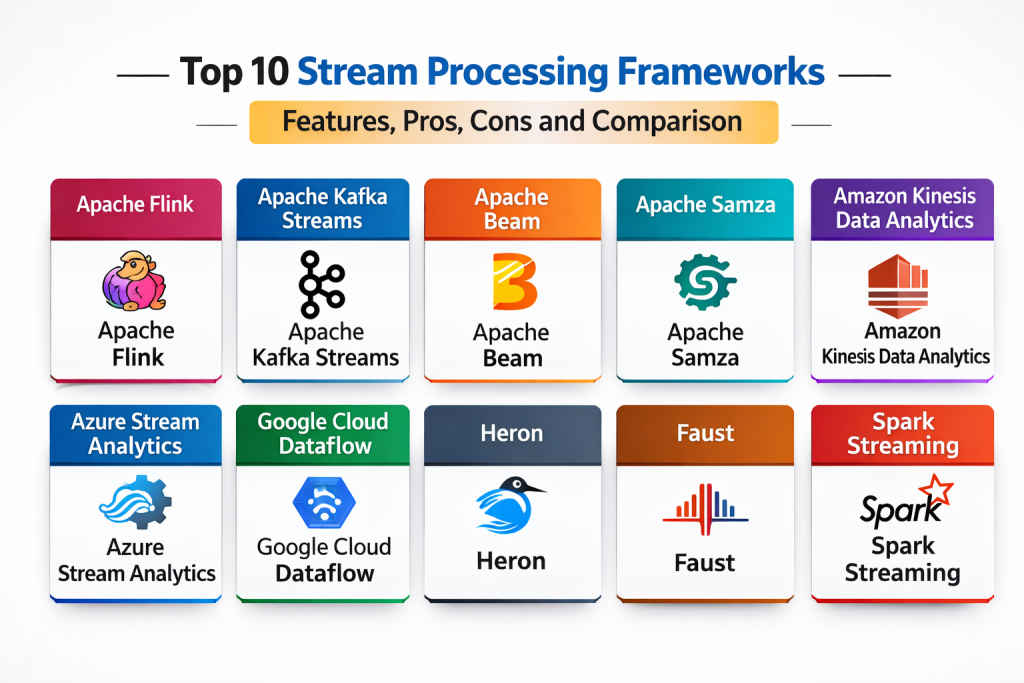

Top 10 Stream Processing Frameworks

Tool 1 — Apache Flink

Apache Flink is a widely used stateful stream processing framework designed for low-latency processing and complex event workflows. It is commonly chosen for large-scale streaming ETL, real-time analytics, and event-driven applications.

Key Features

- Stateful stream processing with fault tolerance

- Rich windowing, event-time processing, and watermark support

- Exactly-once style processing patterns (Varies by setup)

- Scalable checkpoints and state backends (Varies)

- Connectors for common streaming and storage systems (Varies)

- SQL and Table APIs for streaming analytics (Varies)

- Strong support for complex event processing patterns (Varies)
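To make the event-time and watermark idea above concrete, here is a minimal framework-free sketch in plain Python. It is not Flink's API: `WatermarkTracker` and `max_lateness_ms` are hypothetical names, and the rule used (watermark = largest event time seen minus a fixed lateness allowance) is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass
class WatermarkTracker:
    """Tracks a watermark that trails the largest event time seen so far."""

    max_lateness_ms: int          # hypothetical lateness allowance
    max_event_time_ms: int = -1   # -1 means no event has been observed yet

    def watermark(self) -> int:
        """Event time up to which the stream is assumed to be complete."""
        if self.max_event_time_ms < 0:
            return -1  # nothing seen yet, so nothing can be late
        return self.max_event_time_ms - self.max_lateness_ms

    def observe(self, event_time_ms: int) -> bool:
        """Record an event; return True if on time, False if it is late."""
        on_time = event_time_ms > self.watermark()
        self.max_event_time_ms = max(self.max_event_time_ms, event_time_ms)
        return on_time


tracker = WatermarkTracker(max_lateness_ms=5_000)
# Events arrive out of order; the fourth one is older than the watermark.
results = [tracker.observe(t) for t in (10_000, 12_000, 9_000, 6_000, 20_000)]
print(results)  # [True, True, True, False, True]
```

A real engine would also decide what to do with late events (drop, side output, or update an already emitted result); this sketch only flags them.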

Pros

- Strong fit for complex, stateful streaming workloads

- Mature ecosystem and broad adoption

- Scales well for high-throughput pipelines

Cons

- Operational complexity can be high without experienced ownership

- Tuning state, checkpoints, and resources takes practice

- Developer experience varies depending on deployment model

Platforms / Deployment

- Linux (common)

- Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Flink integrates with streaming platforms, data lakes, and analytics systems through a broad connector ecosystem.

- Connectors for event streaming systems (Varies)

- Integration with storage and lakehouse systems (Varies)

- SQL-based streaming patterns (Varies)

- APIs for custom sources and sinks

- Ecosystem tools for monitoring and operations (Varies)

Support & Community

Large open-source community, extensive documentation, and many real-world deployment examples.

Tool 2 — Apache Spark Structured Streaming

Apache Spark Structured Streaming brings streaming processing into the Spark ecosystem, enabling teams to write streaming pipelines using familiar Spark APIs. It is often chosen when teams already use Spark for batch processing and want a unified approach.

Key Features

- Unified batch and streaming programming model

- Micro-batch processing by default, with an experimental continuous processing mode (Varies)

- Windowing, aggregations, and state management (Varies)

- Integration with Spark ecosystem and storage systems (Varies)

- Structured APIs for transformations and joins (Varies)

- Operational tooling through Spark platform setups (Varies)

- Broad language support through Spark APIs

Pros

- Great for teams already invested in Spark

- Useful for “same logic for batch and streaming” workflows

- Strong ecosystem and wide adoption

Cons

- Latency depends on processing mode and configuration

- State management tuning can be complex at scale

- Operational stability depends on cluster management quality

Platforms / Deployment

- Linux (common) / Windows (Varies)

- Cloud / Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Structured Streaming works well where Spark is already used for analytics, ETL, and data lake pipelines.

- Integration with event sources through connectors (Varies)

- Compatibility with common storage formats and systems (Varies)

- Integration with orchestration tools (Varies)

- APIs for custom processing and sinks

- Broad community tooling and best practices

Support & Community

Very large community and learning resources. Support depends on how Spark is deployed and managed.

Tool 3 — Apache Beam

Apache Beam provides a unified programming model for batch and stream processing, allowing pipelines to run on different execution engines. It is useful when teams want portability across runners and standardized pipeline definitions.

Key Features

- Unified model for batch and streaming pipelines

- Portable execution across multiple runners (Varies)

- Windowing and event-time processing semantics

- Stateful processing support (Varies)

- SDK support for multiple languages (Varies)

- Rich transforms library and pipeline composition

- Integration patterns through runner ecosystems (Varies)

Pros

- Strong portability and standardized pipeline definitions

- Good for teams that want vendor flexibility

- Rich windowing semantics for complex streaming logic

Cons

- Operational experience depends heavily on the chosen runner

- Debugging can be more complex across portability layers

- Performance characteristics vary by execution engine

Platforms / Deployment

- Varies / N/A (depends on runner)

- Cloud / Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Beam integrates through runners and connectors, making it adaptable across different streaming ecosystems.

- Compatibility with multiple execution backends (Varies)

- Connectors via runner ecosystems (Varies)

- Libraries and SDK tools for pipeline development

- Monitoring and observability depend on runner (Varies)

- Integration with common stream sources (Varies)

Support & Community

Strong open-source community; many teams rely on runner-specific ecosystems for operations.

Tool 4 — Kafka Streams

Kafka Streams is a Java client library for building stream processing applications that run inside your own services rather than on a separate processing cluster. It is commonly used when teams want stream processing tightly integrated with Kafka and prefer application-style deployment.

Key Features

- Embedded stream processing within application services

- Stateful processing with local state stores (Varies)

- Windowed aggregations and joins for event streams

- Exactly-once style semantics in supported workflows (Varies)

- Tight integration with Kafka topics and consumer groups

- Simple scaling through service instances

- Good fit for event-driven microservices patterns

Pros

- Simple deployment model for teams building microservices

- Strong Kafka integration and ecosystem alignment

- Good for real-time transformations and aggregations

Cons

- Best fit is Kafka-centric; portability is lower

- Operational visibility depends on application observability

- Complex pipelines can become harder to manage across many services

Platforms / Deployment

- Linux / Windows (Varies) / macOS (Varies)

- Cloud / Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Kafka Streams integrates naturally with Kafka-based architectures and supports building real-time processing into product services.

- Kafka topic integration for sources and sinks

- Compatibility with schema governance tooling (Varies)

- Integration with monitoring stacks through application telemetry

- Works with connector ecosystems indirectly (Varies)

- Strong language ecosystem for Java-based stacks

Support & Community

Strong community due to Kafka adoption. Support depends on how your Kafka environment is operated.

Tool 5 — Apache Samza

Apache Samza is a stream processing framework, originally developed at LinkedIn, for building real-time pipelines on top of messaging systems such as Apache Kafka. It is often used for scalable stream processing with state and fault tolerance, typically in Kafka-centric enterprise architectures.

Key Features

- Stream processing model with stateful operators (Varies)

- Integration with messaging systems for ingestion (Varies)

- Fault-tolerant processing and checkpoint patterns (Varies)

- Local state storage options (Varies)

- Scalable parallelism and partitioned processing

- Support for real-time ETL and event transformations

- Extensible APIs for custom integrations

Pros

- Good fit for stream pipelines with strong partition-based scaling

- Can be effective for reliable event transformations

- Extensible architecture for custom needs

Cons

- Smaller ecosystem compared to Flink and Spark

- Talent pool and community resources can be limited

- Operational patterns depend on deployment environment

Platforms / Deployment

- Linux (common)

- Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Samza integrations depend on messaging and storage choices and are often tuned for specific enterprise patterns.

- Integration with event sources (Varies)

- Connectors and custom IO options (Varies)

- Compatibility with monitoring and ops tooling (Varies)

- Extensible APIs for sinks and processors

- Works well in partition-based data models

Support & Community

Community exists but is smaller than mainstream frameworks; support depends on internal expertise and selected deployment patterns.

Tool 6 — Hazelcast Jet

Hazelcast Jet is a distributed stream processing engine designed for low-latency, in-memory processing. Since Hazelcast 5.0 it ships as part of the unified Hazelcast Platform rather than as a separate product. It is often used for real-time pipelines where fast computation and simpler operational patterns are priorities.

Key Features

- Distributed stream processing with low latency

- Stateful processing and windowing support (Varies)

- In-memory acceleration patterns (Varies)

- Integration with Hazelcast ecosystem (Varies)

- Scalable pipeline execution

- SQL and processing capabilities (Varies)

- Support for event transformations and enrichment

Pros

- Strong low-latency processing focus

- Practical for real-time enrichment and transformations

- Useful when in-memory performance is important

Cons

- Ecosystem breadth smaller than Flink and Spark

- Best fit often depends on Hazelcast adoption

- Feature depth varies by edition and deployment model

Platforms / Deployment

- Linux / Windows (Varies) / macOS (Varies)

- Cloud / Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Hazelcast Jet integrates well within Hazelcast-centric stacks and supports streaming pipelines that require quick computation.

- Integrations with common event sources (Varies)

- Connectors for storage and messaging systems (Varies)

- APIs for custom pipeline stages

- Monitoring and cluster management options (Varies)

- Useful for operational data flows and enrichment

Support & Community

Community and vendor support options exist; adoption is often strongest in Hazelcast-oriented environments.

Tool 7 — RisingWave

RisingWave is a streaming database designed for continuous queries and materialized views over event streams. It is often used when teams want streaming SQL with durable state and easier consumption by downstream apps.

Key Features

- Continuous SQL queries over streaming data (Varies)

- Materialized views for real-time results (Varies)

- Stateful processing and windowing patterns (Varies)

- Integration with streaming sources (Varies)

- Built-in storage for streaming state (Varies)

- Supports event enrichment and transformations (Varies)

- Designed for real-time analytics workloads

Pros

- SQL-first approach can reduce engineering friction

- Materialized views simplify downstream consumption

- Strong fit for real-time analytics and derived streams

Cons

- Ecosystem maturity varies compared to older frameworks

- Operational characteristics depend on deployment choices

- Feature coverage depends on edition and setup

Platforms / Deployment

- Linux (common)

- Cloud / Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

RisingWave is typically used for streaming analytics patterns where continuous SQL produces ready-to-use outputs.

- Integration with streaming sources (Varies)

- SQL access for BI and application queries (Varies)

- Connectors for sinks and destinations (Varies)

- APIs and drivers for integration (Varies)

- Monitoring and operations tooling (Varies)

Support & Community

Growing community and documentation; support depends on edition and vendor involvement.

Tool 8 — Materialize

Materialize is designed for streaming SQL with incremental computation, letting teams build real-time views that stay updated as events arrive. It is used for operational analytics and reactive applications.
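The idea of incremental computation can be sketched in a few lines of plain Python. This is an illustration of the general technique, not Materialize's implementation: the class name, the `diff` convention (+1 for an inserted row, -1 for a retracted row), and the sample data are all assumptions.

```python
from collections import defaultdict


class IncrementalOrderTotals:
    """Maintains SUM(amount) GROUP BY customer without rescanning history."""

    def __init__(self) -> None:
        self._sum = defaultdict(int)    # running total per customer, in cents
        self._rows = defaultdict(int)   # number of live rows per customer

    def apply(self, customer: str, amount_cents: int, diff: int) -> None:
        """Apply one change: diff=+1 inserts a row, diff=-1 retracts it."""
        self._sum[customer] += diff * amount_cents
        self._rows[customer] += diff
        if self._rows[customer] == 0:
            # No rows left for this key, so it disappears from the view.
            del self._sum[customer]
            del self._rows[customer]

    def read(self) -> dict:
        """Return the current view contents; cost is independent of history."""
        return dict(self._sum)


view = IncrementalOrderTotals()
view.apply("alice", 1200, +1)
view.apply("bob", 500, +1)
view.apply("alice", 300, +1)
view.apply("bob", 500, -1)   # bob's only order is cancelled
print(view.read())  # {'alice': 1500}
```

Each change touches only the affected key, which is why an incrementally maintained view can stay current as events arrive instead of recomputing the whole aggregate.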

Key Features

- Streaming SQL for continuous, incremental updates (Varies)

- Materialized views that stay current automatically

- Joins, aggregations, and transformations over streams (Varies)

- Integration with streaming sources (Varies)

- Outputs that can be queried by apps and BI tools (Varies)

- Stateful processing handled under the hood (Varies)

- Designed for low-latency operational analytics

Pros

- Simplifies real-time pipelines for SQL-friendly teams

- Incremental updates reduce recomputation overhead

- Good fit for reactive apps and operational dashboards

Cons

- Ecosystem maturity differs from long-established frameworks

- Feature specifics depend on deployment and edition

- Best fit depends on data patterns and query design

Platforms / Deployment

- Linux (common) / Web access (Varies)

- Cloud / Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Materialize is often used as a streaming serving layer that outputs ready-to-query views for applications and dashboards.

- Integration with event streaming sources (Varies)

- SQL access patterns for downstream consumers (Varies)

- Connectors for outputs and sinks (Varies)

- APIs and drivers for application integration (Varies)

- Works well in event-driven architectures

Support & Community

Growing community and vendor support options; operational best practices are improving over time.

Tool 9 — ksqlDB

ksqlDB provides streaming SQL for Kafka, allowing teams to build stream transformations and materialized tables without writing full applications. It is often used for rapid development of streaming pipelines by SQL-capable teams.

Key Features

- SQL-based stream processing on Kafka topics

- Stream and table abstractions with joins (Varies)

- Windowed aggregations and filtering

- Materialized state for continuous results (Varies)

- Tight integration with Kafka ecosystems (Varies)

- Rapid development for stream transformations

- Operational controls and monitoring options (Varies)

Pros

- Low barrier for SQL-capable teams to build streaming logic

- Strong fit for Kafka-centric environments

- Fast iteration for common streaming ETL patterns

Cons

- Best fit is Kafka-centric; portability is lower

- Complex logic can outgrow SQL-only approaches

- Operational scaling depends on environment and usage

Platforms / Deployment

- Linux (common)

- Cloud / Self-hosted / Hybrid (Varies)

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

ksqlDB fits streaming architectures where Kafka is the backbone and SQL-based transformations simplify pipeline development.

- Kafka topic integration for inputs and outputs

- Schema governance alignment patterns (Varies)

- Integration with connectors through Kafka ecosystem (Varies)

- Monitoring and management tooling (Varies)

- Useful for building derived streams and tables quickly

Support & Community

Community and vendor support depend on the Kafka ecosystem and how ksqlDB is deployed.

Tool 10 — Google Cloud Dataflow

Google Cloud Dataflow is a managed service for running Apache Beam pipelines. It is used when teams want streaming and batch processing with managed operations, especially within Google Cloud environments.

Key Features

- Managed execution of streaming and batch pipelines

- Apache Beam model support for portability (Varies)

- Autoscaling and managed operations (Varies)

- Windowing and event-time processing semantics

- Integration with Google Cloud services (Varies)

- Monitoring and pipeline health tooling (Varies)

- Reliable fault-tolerant processing patterns (Varies)

Pros

- Managed operations reduce infrastructure overhead

- Strong for event-time and complex windowing use cases

- Good fit for Google Cloud-centric architectures

Cons

- Cloud-specific operational patterns can reduce portability in practice

- Costs require careful monitoring for always-on pipelines

- Debugging depends on platform tooling and pipeline design

Platforms / Deployment

- Web (via cloud tooling)

- Cloud

Security & Compliance

- Not publicly stated

Integrations & Ecosystem

Dataflow integrates closely with Google Cloud services and supports building end-to-end streaming pipelines in that ecosystem.

- Integration with Google Cloud ingestion and storage (Varies)

- Pipeline monitoring and operations tooling (Varies)

- SDK and API support for automation (Varies)

- Connector patterns through Beam and platform tooling (Varies)

- Suitable for streaming ETL and event processing pipelines

Support & Community

Strong documentation and cloud support plans; community support is tied to Apache Beam and Google Cloud user bases.

Comparison Table (Top 10)

| Tool Name | Best For | Platform(s) Supported | Deployment (Cloud/Self-hosted/Hybrid) | Standout Feature | Public Rating |
|---|---|---|---|---|---|
| Apache Flink | Large-scale stateful stream processing | Linux (common) | Self-hosted / Hybrid | Strong event-time and stateful processing | N/A |
| Apache Spark Structured Streaming | Unified batch plus streaming on Spark | Linux (common) / Windows (Varies) | Cloud / Self-hosted / Hybrid | Reuse Spark skills for streaming pipelines | N/A |
| Apache Beam | Portable batch and streaming pipelines | Varies / N/A | Cloud / Self-hosted / Hybrid | Runner portability with rich windowing semantics | N/A |
| Kafka Streams | Application-embedded processing on Kafka | Linux / Windows (Varies) / macOS (Varies) | Cloud / Self-hosted / Hybrid | Simple deployment inside microservices | N/A |
| Apache Samza | Partition-based stream processing pipelines | Linux (common) | Self-hosted / Hybrid | Reliable processing in partitioned models | N/A |
| Hazelcast Jet | Low-latency in-memory stream processing | Linux / Windows (Varies) / macOS (Varies) | Cloud / Self-hosted / Hybrid | In-memory acceleration patterns | N/A |
| RisingWave | Streaming SQL with materialized results | Linux (common) | Cloud / Self-hosted / Hybrid | Continuous queries and materialized views | N/A |
| Materialize | Incremental streaming SQL views | Linux (common) | Cloud / Self-hosted / Hybrid | Incremental computation for real-time views | N/A |
| ksqlDB | SQL-based streaming on Kafka | Linux (common) | Cloud / Self-hosted / Hybrid | Rapid stream transformations using SQL | N/A |
| Google Cloud Dataflow | Managed Beam pipelines in cloud | Web (via tooling) | Cloud | Managed scaling and operations for Beam | N/A |

Evaluation & Scoring of Stream Processing Frameworks

Weights used: Core features 25%, Ease of use 15%, Integrations & ecosystem 15%, Security & compliance 10%, Performance & reliability 10%, Support & community 10%, Price / value 15%. Scores are comparative across typical stream processing scenarios and should be validated by a pilot that measures latency, correctness, checkpoint behavior, state growth, and operational workload.

| Tool Name | Core (25%) | Ease (15%) | Integrations (15%) | Security (10%) | Performance (10%) | Support (10%) | Value (15%) | Weighted Total (0–10) |
|---|---|---|---|---|---|---|---|---|
| Apache Flink | 9 | 6 | 8 | 5 | 9 | 8 | 8 | 7.75 |
| Apache Spark Structured Streaming | 8 | 7 | 8 | 5 | 8 | 9 | 8 | 7.65 |
| Apache Beam | 8 | 6 | 7 | 5 | 7 | 8 | 8 | 7.15 |
| Kafka Streams | 7 | 8 | 8 | 5 | 7 | 8 | 8 | 7.35 |
| Apache Samza | 7 | 6 | 6 | 5 | 7 | 6 | 8 | 6.55 |
| Hazelcast Jet | 7 | 7 | 6 | 5 | 8 | 7 | 7 | 6.75 |
| RisingWave | 7 | 8 | 6 | 5 | 7 | 6 | 7 | 6.70 |
| Materialize | 7 | 8 | 6 | 5 | 7 | 6 | 6 | 6.55 |
| ksqlDB | 6 | 8 | 7 | 5 | 6 | 7 | 7 | 6.60 |
| Google Cloud Dataflow | 8 | 7 | 7 | 6 | 8 | 7 | 6 | 7.10 |

How to interpret the scores

- Use Weighted Total to shortlist options, not to declare a single winner.

- If your main need is complex stateful processing, weight Core and Performance more heavily.

- If your team needs quick adoption, weight Ease and Integrations more heavily.

- Always validate with a pilot that reflects your real event volume, state size, and recovery requirements.
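The weighting advice above is easy to try out yourself. The sketch below recomputes a weighted total from category scores and lets you swap in your own weights. The default weights and the Kafka Streams scores come from this article; the `stateful_weights` profile is a hypothetical example, not a recommendation.

```python
# Weights from the article, kept as whole percentages to avoid float drift.
DEFAULT_WEIGHTS = {
    "core": 25, "ease": 15, "integrations": 15, "security": 10,
    "performance": 10, "support": 10, "value": 15,
}


def weighted_total(scores: dict, weights: dict = DEFAULT_WEIGHTS) -> float:
    """Return the 0-10 weighted total for one tool's category scores."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must add up to 100 percent")
    return sum(scores[name] * pct for name, pct in weights.items()) / 100


# Category scores taken from the Kafka Streams row of the scoring table.
kafka_streams = {
    "core": 7, "ease": 8, "integrations": 8, "security": 5,
    "performance": 7, "support": 8, "value": 8,
}

# Hypothetical re-weighting for a team focused on complex stateful workloads.
stateful_weights = {
    "core": 35, "ease": 10, "integrations": 10, "security": 10,
    "performance": 20, "support": 5, "value": 10,
}

print(weighted_total(kafka_streams))                    # 7.35
print(weighted_total(kafka_streams, stateful_weights))  # 7.15
```

Running every shortlisted tool through the same function with your own weights is a quick way to see how sensitive the ranking is to your priorities.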

Which Stream Processing Framework Is Right for You?

Solo / Freelancer

If you need streaming for a small project, choose a framework that minimizes operational overhead. Kafka Streams is practical when you are already using Kafka and want to deploy processing inside an application. ksqlDB is useful for SQL-driven transformations without building full services. If you are in a cloud-native environment and want managed operations, Google Cloud Dataflow can reduce infrastructure work, but it depends on your cloud commitment.

SMB

SMBs typically need reliable pipelines without a large platform team. Kafka Streams can work well for microservice-driven architectures with moderate complexity. Apache Spark Structured Streaming is a good fit if your team already uses Spark for ETL and wants to extend into streaming. ksqlDB can accelerate common transformation use cases when your pipelines are Kafka-centric and SQL-capable teams are driving delivery.

Mid-Market

Mid-market organizations often need better governance, higher throughput, and stronger operational patterns. Apache Flink is a strong choice when you need stateful processing, event-time semantics, and low latency at scale. Apache Spark Structured Streaming works well when you want a unified batch plus streaming approach and have Spark skills available. Apache Beam can be useful if you want a standardized pipeline model that can run on multiple backends, but your chosen runner will define operational reality.

Enterprise

Enterprises typically prioritize reliability, standardization, security controls, and multi-team operations. Apache Flink is often a strong foundation for enterprise streaming programs with complex processing. Apache Spark Structured Streaming fits where Spark is already the standard for data processing. Google Cloud Dataflow is a strong option for enterprises deeply committed to Google Cloud that want managed stream processing operations. Kafka Streams is effective for domain-oriented teams building event-driven services, while streaming SQL tools like ksqlDB can support rapid transformations when governance is clear.

Budget vs Premium

Open-source frameworks like Apache Flink, Apache Spark Structured Streaming, Apache Beam, Kafka Streams, and Apache Samza can reduce licensing costs but require infrastructure and platform ownership. Managed services like Google Cloud Dataflow can increase service spend while reducing staffing overhead for operations. SQL-first streaming databases like RisingWave and Materialize can reduce engineering effort for some use cases, but platform choice should consider operational maturity and long-term cost.

Feature Depth vs Ease of Use

If you need deep event-time semantics, complex windowing, and robust stateful processing, Apache Flink and Apache Beam provide strong foundations. If you need ease and a familiar model, Apache Spark Structured Streaming can reduce learning friction for Spark teams. Kafka Streams and ksqlDB can be very approachable in Kafka-centric environments, especially for domain teams. Materialize and RisingWave can be easier for SQL-first teams that want streaming results without building large pipeline codebases.

Integrations & Scalability

Apache Flink and Apache Spark Structured Streaming offer broad integration patterns with event streaming systems and storage layers, but require careful operational design. Kafka Streams and ksqlDB integrate tightly with Kafka, making them strong when Kafka is the event backbone. Apache Beam provides portability, but integration strength depends on the chosen runner. Google Cloud Dataflow integrates strongly with Google Cloud services, which is excellent for that ecosystem but less portable across clouds.

Security & Compliance Needs

Stream processing often touches sensitive customer and operational data. Define baseline requirements early: authentication, authorization, encryption expectations, audit visibility, and data retention rules. Avoid assuming compliance claims and confirm through your standard review process. Also consider how state is stored, where checkpoints live, and whether access to state and logs is governed. Security is as much about pipeline ownership and governance as it is about technical settings.

Frequently Asked Questions (FAQs)

1. What is stream processing compared to event streaming?

Event streaming moves events reliably from producers to consumers, while stream processing transforms and analyzes those events in motion. Most modern systems use both together.

2. Do I always need exactly-once guarantees?

Not always. Many real systems rely on at-least-once processing with idempotent consumers and deduplication. Exactly-once patterns can be valuable but must be validated end-to-end in your architecture.
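As a rough illustration of at-least-once delivery with an idempotent consumer, the sketch below skips any event whose ID has already been applied. The event shape (`event_id`, `account`, `amount_cents`) and the class name are assumptions made for the example. A production version would persist the seen-ID set together with the state update and expire old IDs.

```python
class IdempotentLedger:
    """Applies each event at most once, even if it is delivered repeatedly."""

    def __init__(self) -> None:
        self.balances = {}            # account -> balance in cents
        self._seen_event_ids = set()  # in-memory only; an assumption for brevity

    def handle(self, event: dict) -> bool:
        """Apply the event; return False if it was a duplicate delivery."""
        event_id = event["event_id"]
        if event_id in self._seen_event_ids:
            return False  # already applied, so redelivery is a no-op
        account = event["account"]
        self.balances[account] = self.balances.get(account, 0) + event["amount_cents"]
        self._seen_event_ids.add(event_id)
        return True


ledger = IdempotentLedger()
deliveries = [
    {"event_id": "e1", "account": "acct-1", "amount_cents": 1000},
    {"event_id": "e2", "account": "acct-1", "amount_cents": 500},
    {"event_id": "e1", "account": "acct-1", "amount_cents": 1000},  # redelivered
]
applied = [ledger.handle(e) for e in deliveries]
print(applied)          # [True, True, False]
print(ledger.balances)  # {'acct-1': 1500}
```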

3. How do I choose between Flink and Spark Structured Streaming?

Choose Flink when you need low-latency event-time processing and complex stateful logic. Choose Spark Structured Streaming when your team already uses Spark heavily and you want a unified batch plus streaming model.

4. What is stateful processing and why does it matter?

Stateful processing means the framework can remember information across events, such as counts, sessions, rolling metrics, or deduplication keys. It enables richer real-time applications beyond simple filtering.
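A small framework-free sketch shows what "remembering information across events" looks like: per-user state that counts events and sessions. The 30-minute session gap, the names, and the assumption that events arrive in timestamp order per user are all illustrative choices, not part of any specific framework.

```python
from dataclasses import dataclass
from typing import Dict, Optional

SESSION_GAP_S = 1800  # hypothetical rule: 30 minutes of silence ends a session


@dataclass
class UserState:
    event_count: int = 0
    session_count: int = 0
    last_seen_s: Optional[int] = None


def update(state_by_user: Dict[str, UserState], user: str, ts_s: int) -> UserState:
    """Fold one event into that user's state and return the updated state."""
    state = state_by_user.setdefault(user, UserState())
    # A new session starts on the first event or after a long enough gap.
    if state.last_seen_s is None or ts_s - state.last_seen_s > SESSION_GAP_S:
        state.session_count += 1
    state.event_count += 1
    state.last_seen_s = ts_s
    return state


state_by_user: Dict[str, UserState] = {}
for user, ts_s in [("u1", 0), ("u1", 600), ("u2", 700), ("u1", 4000)]:
    update(state_by_user, user, ts_s)

print(state_by_user["u1"])  # 3 events across 2 sessions
print(state_by_user["u2"])  # 1 event in 1 session
```

In a real framework this dictionary would live in a fault-tolerant state store and be restored from checkpoints after a failure.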

5. What are windows in stream processing?

Windows group events over time or count intervals, such as per minute or per session. They are essential for aggregation like rolling metrics, session analytics, and time-based alerts.
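For example, a fixed-size (tumbling) time window can be sketched in plain Python by rounding each event timestamp down to the start of its window. The function name and sample events are made up for illustration, and the sketch ignores late data and window expiry.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def tumbling_counts(
    events: Iterable[Tuple[int, str]], window_s: int
) -> Dict[Tuple[int, str], int]:
    """Count events per (window start, key) using fixed, non-overlapping windows."""
    counts: Counter = Counter()
    for ts_s, key in events:
        window_start = ts_s - ts_s % window_s  # round down to the window boundary
        counts[(window_start, key)] += 1
    return dict(counts)


events = [(5, "login"), (42, "login"), (61, "login"), (75, "error"), (119, "login")]
per_minute = tumbling_counts(events, window_s=60)
print(per_minute)
# {(0, 'login'): 2, (60, 'login'): 2, (60, 'error'): 1}
```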

6. What should I monitor in production streaming pipelines?

Monitor input lag, throughput, error rates, checkpoint health, state size, backpressure, and restart frequency. Also monitor output correctness signals and data quality checks.
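Input lag is often the simplest of these signals to compute: for each partition, subtract the last committed offset from the latest offset in the log. The sketch below assumes you already have both sets of offsets from your platform's tooling. The sample numbers and the alert threshold are hypothetical, and treating a partition with no committed offset as offset 0 is a simplifying assumption.

```python
from typing import Dict


def partition_lag(
    end_offsets: Dict[int, int], committed_offsets: Dict[int, int]
) -> Dict[int, int]:
    """Lag per partition = latest offset in the log minus last committed offset."""
    return {
        # Partitions with no commit yet are treated as fully unread (assumption).
        partition: max(end - committed_offsets.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    }


end_offsets = {0: 1500, 1: 980, 2: 400}
committed_offsets = {0: 1450, 1: 980}  # partition 2 has no commit yet

lag = partition_lag(end_offsets, committed_offsets)
total_lag = sum(lag.values())

LAG_ALERT_THRESHOLD = 300  # hypothetical threshold; tune per pipeline
should_alert = total_lag > LAG_ALERT_THRESHOLD

print(lag)           # {0: 50, 1: 0, 2: 400}
print(should_alert)  # True
```

Tracking how lag changes over time is usually more informative than a single reading, since steadily growing lag points to a pipeline that cannot keep up.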

7. Can stream processing support real-time machine learning features?

Yes. Many teams use stream processing to compute features in real time, join events with reference data, and deliver feature values to applications or online stores. Data quality and latency are key.
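Joining events with reference data can be sketched as a lookup applied to each event as it streams past. The field names (`user_id`, `tier`), the in-memory profile table, and the `"unknown"` default below are assumptions for illustration. In production the reference data would typically be a changelog-backed table or an external store.

```python
from typing import Dict, Iterable, Iterator


def enrich(events: Iterable[dict], user_profiles: Dict[str, dict]) -> Iterator[dict]:
    """Attach the user's tier to each event, defaulting when no profile exists."""
    for event in events:
        profile = user_profiles.get(event["user_id"], {})
        yield {**event, "tier": profile.get("tier", "unknown")}


user_profiles = {"u1": {"tier": "gold"}}
clicks = [
    {"user_id": "u1", "page": "/cart"},
    {"user_id": "u9", "page": "/home"},  # no profile on record
]

features = list(enrich(clicks, user_profiles))
print(features)
```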

8. Is streaming SQL enough for most teams?

Streaming SQL can cover many transformation and aggregation use cases, especially for analytics-oriented teams. However, complex business logic, custom enrichment, or specialized patterns may require full programming frameworks.

9. How do I keep streaming pipelines reliable during upgrades?

Use staged rollouts, compatibility testing, and clear rollback plans. Validate checkpoint compatibility and state migration behavior. Run chaos-style tests to confirm recovery works under failures.

10. What is a safe way to pilot a stream processing framework?

Pick one high-value use case, define latency and correctness targets, ingest realistic event volume, and build a small pipeline with monitoring and alerts. Measure recovery behavior, state growth, and operational workload before scaling.

Conclusion

Stream processing frameworks turn continuous event flows into useful outcomes like enriched events, real-time metrics, alerts, and reactive application behavior. The best framework depends on your latency targets, state complexity, team skills, and operational maturity. Apache Flink stands out for complex stateful processing and event-time workloads, while Apache Spark Structured Streaming fits well for teams already standardized on Spark and wanting a unified model. Apache Beam is valuable when you want a consistent pipeline model with runner flexibility, while Kafka Streams and ksqlDB are practical for Kafka-centric teams that prefer application-style deployment or SQL-based transformations. Streaming SQL databases like Materialize and RisingWave can reduce friction for SQL-first teams that want continuously updated views. A practical next step is to shortlist two or three options, run a pilot with real event data, validate correctness and recovery under failure, and standardize monitoring and governance before expanding to more pipelines.
